MarioGPT Leads AI-Generated Future Where We Play Nintendo Forever

There can never be too much Mario in the world. It may have been a while since you played one of the original NES games, probably because they are so familiar to you. But what if we told you that researchers had created a way to generate infinite Mario levels, so you could play a new one every day, forever?

A team at the IT University of Copenhagen has just published a paper (a preprint) and a GitHub page showing a new method for generating Super Mario Bros levels. They call it MarioGPT.

MarioGPT is based on GPT-2, not one of these newfangled conversational AIs. Large language models are good at more than taking in words in sentences like these and putting out more like them: they are general-purpose pattern recognition and replication machines.

In an email, the paper’s lead author said they chose the smaller model to see if it would work. He thinks GPT-2 is better suited to small data sets than GPT-3, while also being much lighter and easier to train. With larger data sets and more complicated prompts, however, a more sophisticated model like GPT-3 may be required.

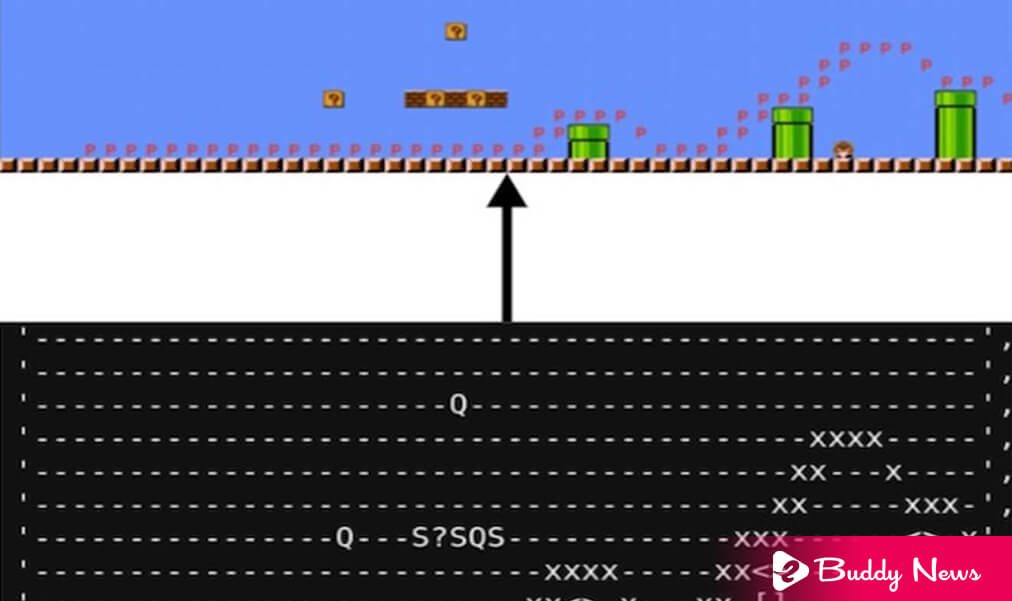

Even a very large LLM will not understand Mario levels natively, so the researchers first had to render a set of them as text. In doing so they produced a sort of Dwarf Fortress version of Mario that would, honestly, be worth paying to play: Mario in the terminal.

Once a level is represented as an ordinary character string, the model can ingest it in the same way as any other character string, whether written language or code. And once the model has learned the patterns that map to features, it can reproduce them.
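To make the idea of a level as a character string concrete, here is a minimal sketch. The tile symbols and the column-by-column ordering are assumptions chosen for illustration, not the researchers' actual encoding.

```python
# Hypothetical tile symbols, for illustration only:
# "-" empty space, "X" ground, "E" enemy, "?" question block.
LEVEL_ROWS = [
    "--------",
    "----?---",
    "--------",
    "--E---E-",
    "XXXX-XXX",
]


def level_to_string(rows):
    """Flatten a level column by column into one string.

    Reading column by column means the string runs from the left edge of
    the level to the right, the same direction the player travels.
    """
    width = len(rows[0])
    if any(len(row) != width for row in rows):
        raise ValueError("all rows must have the same width")
    return "".join(rows[y][x] for x in range(width) for y in range(len(rows)))


def string_to_level(flat, height):
    """Rebuild the row-based level from a column-by-column string."""
    if len(flat) % height != 0:
        raise ValueError("string length must be a multiple of the height")
    width = len(flat) // height
    return ["".join(flat[x * height + y] for x in range(width))
            for y in range(height)]


flat = level_to_string(LEVEL_ROWS)
restored = string_to_level(flat, height=len(LEVEL_ROWS))
```

A string like `flat` can be tokenized like any other text, and anything the model generates in the same format can be turned back into a grid with `string_to_level`.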

The output includes a ‘path’ drawn in lowercase x characters, showing that the level is technically playable. The researchers found that, of 250 levels, the game-playing software agent A* could complete nine out of 10.
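The playability check can be sketched with a small A* search over a text level. This is a heavily simplified stand-in, not the agent used in the paper: it ignores enemies and real jump physics, and the `SOLID` symbol, `max_jump`, and `max_reach` are hypothetical values picked for illustration.

```python
import heapq

SOLID = "X"  # assumed symbol for ground tiles


def standable(rows, x, y):
    """A cell is standable if it is not solid and has solid ground below it."""
    return (rows[y][x] != SOLID
            and y + 1 < len(rows)
            and rows[y + 1][x] == SOLID)


def find_path(rows, max_jump=2, max_reach=2):
    """A* from the first column to the last; returns a list of (x, y) or None.

    One move travels up to max_reach columns sideways, climbs at most
    max_jump tiles, and may fall any distance.
    """
    height, width = len(rows), len(rows[0])

    def heuristic(x):
        # Each move covers at most max_reach columns, so this never overestimates.
        return -(-(width - 1 - x) // max_reach)

    frontier = [(heuristic(0), 0, (0, y), [(0, y)])
                for y in range(height) if standable(rows, 0, y)]
    heapq.heapify(frontier)
    seen = set()
    while frontier:
        _, cost, (x, y), path = heapq.heappop(frontier)
        if x == width - 1:
            return path
        if (x, y) in seen:
            continue
        seen.add((x, y))
        for dx in range(-max_reach, max_reach + 1):
            nx = x + dx
            if dx == 0 or not 0 <= nx < width:
                continue
            for ny in range(height):
                # Smaller y is higher up, so y - ny is the climb height.
                if (nx, ny) in seen or not standable(rows, nx, ny):
                    continue
                if y - ny <= max_jump:
                    new_cost = cost + 1
                    heapq.heappush(frontier, (new_cost + heuristic(nx), new_cost,
                                              (nx, ny), path + [(nx, ny)]))
    return None


def mark_path(rows, path):
    """Draw the path into the level as lowercase x characters."""
    grid = [list(row) for row in rows]
    for x, y in path:
        grid[y][x] = "x"
    return ["".join(row) for row in grid]


playable = ["--------", "--------", "XXX-XXXX"]
blocked = ["--------", "--------", "XXX----X"]
path = find_path(playable)
```

Here `find_path(blocked)` returns `None` because the gap is wider than `max_reach`, while `mark_path(playable, path)` gives a copy of the level with the route drawn in, similar in spirit to the lowercase x path in the model's output.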

‘High novelty’ and ‘interesting path’ trajectories here mean levels that are doable but that neither look like existing ones nor let the player simply run straight through. After all, it would hardly be a success if the levels were all flat with the occasional pipe to clear. So the researchers included some functions to measure how simple the path is and compare it against the data set levels.

Tagged input also let the model understand natural language prompts, such as a request for a level with ‘lots of pipes and lots of enemies’ or ‘lots of blocks, high elevation, no enemies.’
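One plausible way to produce such tags is to count features in each training level and turn the counts into a short description. The sketch below assumes this approach; the symbols, the `lots` threshold, and the wording of the buckets are all hypothetical rather than taken from the paper.

```python
# Hypothetical symbols for the features being counted.
FEATURE_SYMBOLS = {"pipes": "P", "enemies": "E", "blocks": "?"}


def quantity(count, lots=3):
    """Map a raw count onto a coarse word (threshold is an assumption)."""
    if count == 0:
        return "no"
    if count >= lots:
        return "lots of"
    return "some"


def describe_level(rows):
    """Build a prompt-style description from the tiles in a text level."""
    flat = "".join(rows)
    return ", ".join(f"{quantity(flat.count(symbol))} {name}"
                     for name, symbol in FEATURE_SYMBOLS.items())


example = [
    "----?---",
    "-E--E-E-",
    "XXXXXXXX",
]
prompt = describe_level(example)
```

Pairing each training level with a description like `prompt` is what would let a request phrased the same way steer generation later.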

One limitation stems from how the source data is encoded in the Game Level Corpus: there is only one symbol for ‘enemy’ instead of one each for goombas, koopas, and so on. But this can be changed if necessary; the concept they needed to test was whether good levels could be generated at all. Unfortunately, water levels are also currently impossible because they have no representation in the dataset.

The paper’s author said that richer data sets would be explored in future work.

Another group at NYU GameLab has prepared a paper showing a similar process for Sokoban, a block-pushing puzzle game. The principles are the same, though the two papers differ in the details.

The fact that these approaches worked for two genres suggests they could work for others of similar complexity. That will not spawn an infinite Chrono Trigger, but an AI-powered 2D Sonic is possible.

It is not the first Mario generator we have seen. Others tend to rely not on generative AI but on assembling levels from pre-made sequences and tilesets. So you can get a new sequence, but it won’t be original tile by tile, just screen by screen.

This first version of MarioGPT is purely experimental and will hopefully avoid the Sauron gaze of Nintendo, which is known for shutting down fan projects involving its properties. Part of the charm of the original games lies in their difficulty and handcrafted themes, which take more work to recreate. But of course, Infinite Mario sounds like fun.